Captain Narwhal’s choices in transmitting data over NKN

Imagine you are Captain Narwhal of the Millennium Falcon starship, and need to go from Earth to Endor. You have two choices:

- “Planet hopping”: go via the Moon to Mars, from Mars to Tatooine, through Hoth and Naboo, and then land on Endor. It takes a bit of time, but it costs little gas and the journey is secure.

- “Galactic Express”: Head to the nearest Hyperspace terminal (on the moon), pay for a lot of gas, and boom, it zooms your spaceship straight to Endor in a few minutes.

Luckily, you also have these two choices when using NKN to send data from one client to another client. In the next two chapters, we will delve into the details of how they work, how they differ, and how they can best serve different types of applications over the NKN Network.

“Planet Hopping”: Secure & Fast

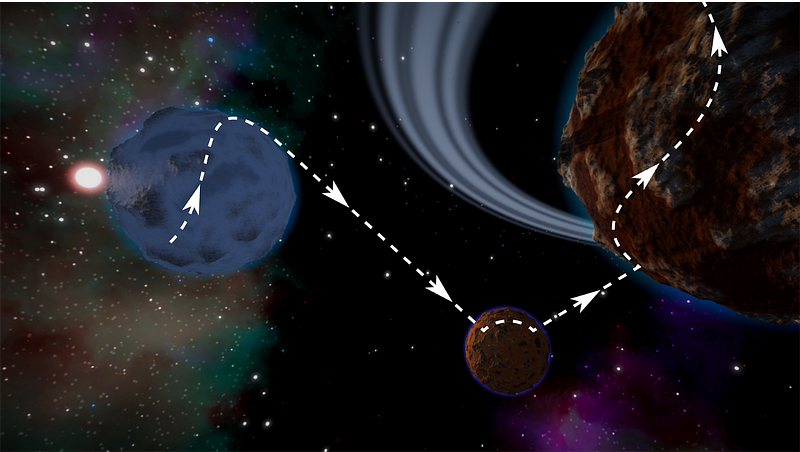

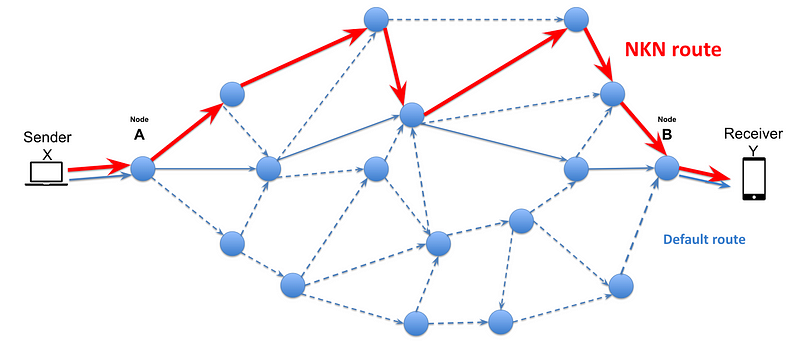

The “normal” way to route packets through the NKN network uses NKN’s significantly improved Chord Distributed Hash Table (DHT) algorithm. Chord DHT is secure and verifiable: given the addresses of all the nodes on the ring, anyone can verify each node’s choice of neighbors and route. The routing works as follows:

- There is a virtual and very large ring

- All NKN nodes are given random but verifiable NKN addresses and are mapped onto the ring

- The sending node calculates the distance (clockwise on the ring) to find the neighbor closest to the destination address

- The selected neighbor relays the data to its own neighbor closest to the receiver, and so on, until the data reaches its destination.
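The steps above can be sketched in a few lines of Python. This is a toy model of classic Chord-style greedy routing, not NKN’s actual implementation: the 16-bit ring, the finger-table neighbor rule, and every function name are assumptions made for illustration, and the destination is assumed to be the address of an existing node.

```python
from bisect import bisect_left

RING_BITS = 16                 # toy ring size, chosen only for this example
RING_SIZE = 1 << RING_BITS


def clockwise_distance(src: int, dst: int) -> int:
    """Distance from src to dst when moving clockwise around the ring."""
    return (dst - src) % RING_SIZE


def successor(nodes: list[int], key: int) -> int:
    """First node at or clockwise after key (nodes must be sorted)."""
    index = bisect_left(nodes, key)
    return nodes[index % len(nodes)]


def neighbors(nodes: list[int], node: int) -> set[int]:
    """Classic Chord finger table (an assumption): successors of node + 2**i."""
    fingers = {
        successor(nodes, (node + (1 << i)) % RING_SIZE) for i in range(RING_BITS)
    }
    fingers.discard(node)
    return fingers


def route(nodes: list[int], source: int, dest: int) -> list[int]:
    """Relay hop by hop, always choosing the neighbor closest to dest."""
    if dest not in nodes:
        raise ValueError("this sketch only routes to existing node addresses")
    path = [source]
    while path[-1] != dest:
        candidates = neighbors(nodes, path[-1])
        path.append(min(candidates, key=lambda n: clockwise_distance(n, dest)))
    return path


# Hypothetical node addresses on the toy ring.
nodes = sorted([5, 1200, 9000, 20000, 33000, 47000, 60000])
print(route(nodes, 5, 47000))
```

Because the neighbor set follows deterministically from the node addresses, anyone who knows the addresses can recompute the route, which is the verifiability property described above.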

A more detailed description of the algorithm can be found in “Stephen Wolfram (Creator of NKS) Tries to Understand NKN”:

https://forum.nkn.org/t/stephen-wolfram-creator-of-nks-tries-to-understand-nkn/185

In some cases, this mode of routing will add more hops and potentially more latency compared to the default route a packet would travel without NKN. There is a tradeoff between efficiency and security. The reason is that NKN’s mining reward is based on Proof of Relay, that is, on how much data a node relays for the network. So in order to prevent collusion attacks, it is critical that nodes cannot manipulate either their neighbors or the routing.

But we have made two enhancements to the original Chord DHT algorithm to improve its efficiency without sacrificing the security and verifiability.

Latency optimized routing

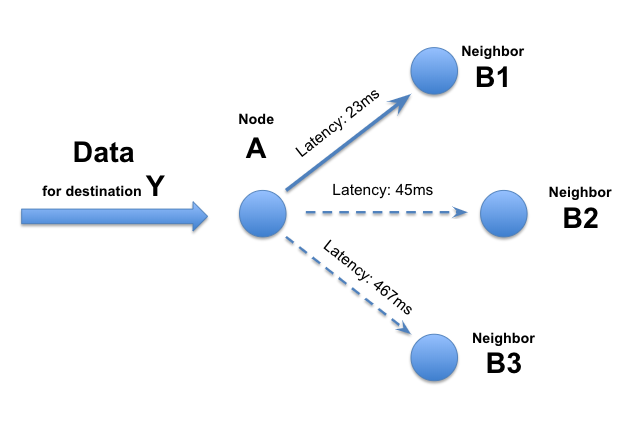

- Node A maintains latency information of all its neighbors (typically 50–100 neighbors for each node in current NKN implementation). This latency data is based on not only the physical networking latency, but also the protocol level processing latency. Thus the NKN latency metric is a good indicator of overall quality of each hop.

- When data destined for Y arrives at Node A, Node A has a choice among 3 next-hop neighbors (B1, B2, B3) to forward it to. This group of neighbors is determined by the NKN algorithm and cannot be picked by Node A itself.

- Node A picks the neighbor with the lowest latency, Node B1 at 23ms, among the 3 proposed by the NKN algorithm. Node A relays the data to Node B1.

- All decisions are local, and decisions are made at each subsequent hop until the data reaches the final destination.
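A minimal sketch of the local decision at Node A, assuming the candidate set has already been handed to the node by the routing algorithm. The `Candidate` type and `pick_next_hop` function are hypothetical names; only B1’s 23ms figure comes from the example above, and the latencies for B2 and B3 are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    node_id: str
    latency_ms: float  # measured network plus protocol-processing latency


def pick_next_hop(candidates: list[Candidate]) -> Candidate:
    """Choose the lowest-latency neighbor among the algorithm's candidates."""
    if not candidates:
        raise ValueError("the routing algorithm proposed no candidates")
    return min(candidates, key=lambda c: c.latency_ms)


# Node A cannot add to this list; it may only choose within it.
proposed = [
    Candidate("B1", 23.0),
    Candidate("B2", 41.0),  # hypothetical value
    Candidate("B3", 57.0),  # hypothetical value
]
print(pick_next_hop(proposed).node_id)
```

The node’s freedom is limited to ranking a set it did not choose, which is how latency can be optimized without letting nodes steer traffic.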

We have found that the default route in today’s Internet is far from optimal. By selecting the lowest NKN Latency route, we can often improve the end user’s Internet experience. But there is more.

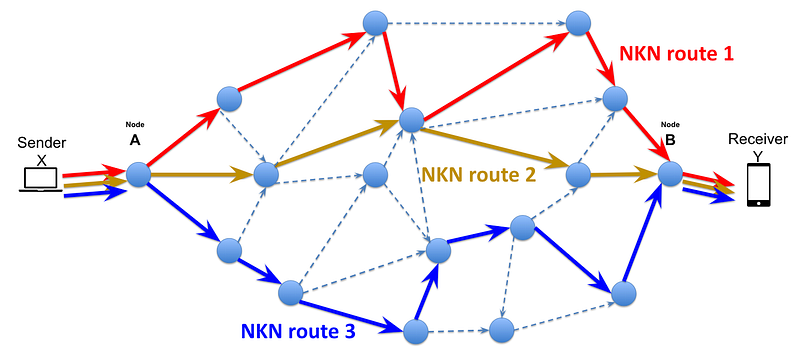

Concurrent multi-path routing

We can also combine multiple NKN routes to get not only better latency but also higher aggregate bandwidth. One good use case is large file transfer based on HTTP/HTTPS range requests. The following diagram illustrates the concept of combining 3 NKN paths, but in reality our multi-client SDK supports any number of concurrent NKN routing paths. With multiple concurrent paths, one can either minimize latency by sending the same data redundantly over different paths (e.g. nkn-multiclient: https://github.com/nknorg/nkn-multiclient-js), or maximize throughput by sending different pieces of data over different paths (e.g. nkn-file-transfer: https://github.com/nknorg/nkn-file-transfer).
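The two strategies can be illustrated without any networking. The sketch below is not the API of nkn-multiclient or nkn-file-transfer; the function names, the round-robin chunk assignment, and the sample latencies are all assumptions used to show the idea.

```python
def stripe(data: bytes, num_paths: int, chunk_size: int) -> list[list[tuple[int, bytes]]]:
    """Throughput mode: spread (offset, chunk) pairs round-robin over the paths."""
    paths: list[list[tuple[int, bytes]]] = [[] for _ in range(num_paths)]
    for index, offset in enumerate(range(0, len(data), chunk_size)):
        paths[index % num_paths].append((offset, data[offset:offset + chunk_size]))
    return paths


def reassemble(paths: list[list[tuple[int, bytes]]]) -> bytes:
    """Receiver side: order the chunks by offset, whichever path carried them."""
    chunks = [chunk for path in paths for chunk in path]
    chunks.sort(key=lambda chunk: chunk[0])
    return b"".join(payload for _, payload in chunks)


def redundant_latency(path_latencies_ms: list[float]) -> float:
    """Latency mode: the same message goes out on every path, so the
    receiver sees it as soon as the fastest copy arrives."""
    return min(path_latencies_ms)


payload = bytes(range(100))
paths = stripe(payload, num_paths=3, chunk_size=16)
assert reassemble(paths) == payload
print(redundant_latency([80.0, 35.0, 120.0]))  # hypothetical per-path latencies
```

Striping by byte offset mirrors how a range-request file transfer could fetch different byte ranges over different paths, while the redundant mode trades extra bandwidth for a lower delivery time.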

And the test results show significant improvements. In late 2018 we built a prototype of a web accelerator and achieved a 167% to 273% speed boost by using multi-path over our testnet. And the bigger the file, the better the boost.

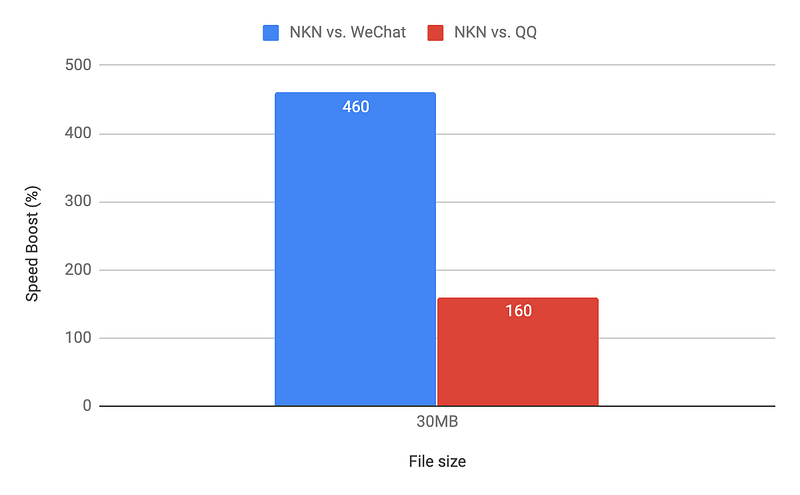

In August 2019, a test of large file transfers over NKN mainnet using nkn-file-transfer (https://github.com/nknorg/nkn-file-transfer) showed transfer speeds 460% that of WeChat and 160% that of QQ. We used 8 concurrent (randomized and secure) paths over NKN mainnet, between PC clients in China and in California.

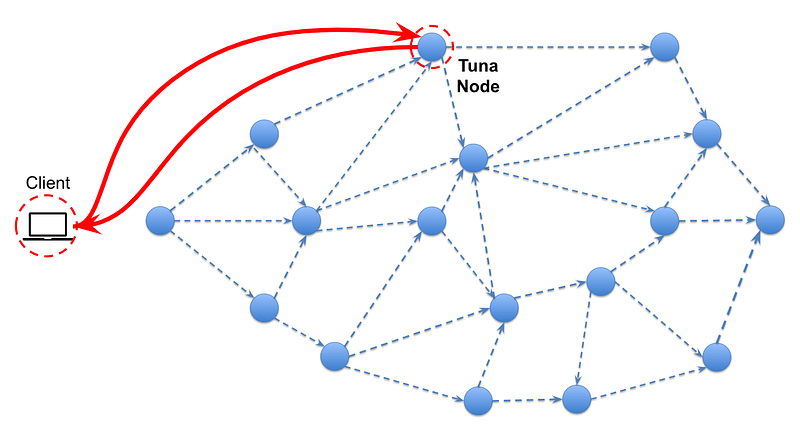

“Galactic Express”: Direct & Faster

But what if you want an even faster experience? As fast as a Hyperspace jump? And you are willing to pay for the gas?

Yes, you definitely can with the NKN network, but with a different mode of operation, as illustrated in the examples below. This mode is specifically designed for applications that require the most direct and fastest service possible. The key is to connect to a single NKN node that offers a direct route to the destination. In this case, the client pays the node directly, because the traffic does not go through NKN’s consensus and the node therefore has no opportunity to earn mining rewards for it.

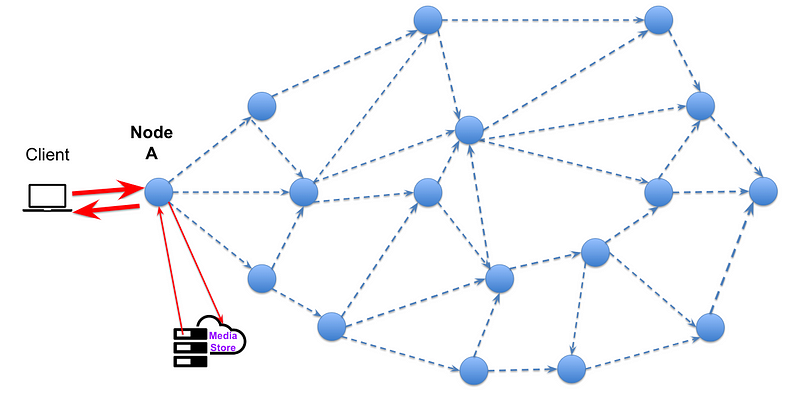

App 1: nCDN for content delivery

- The client connects to Node A, which is chosen based on several factors such as geographical proximity, throughput, latency, and performance.

- Node A is an nCDN edge node that caches content based on client demand.

- When a client wants to stream the latest “Star Wars” movie, Node A discovers it does not have this movie in its local cache. So Node A will fetch a copy from the Media Store. This only needs to happen once.

- Node A will serve the local copy of “Star Wars” directly to the client.

- If there are other users in the same geographical region that also want to stream “Star Wars”, then Node A can serve them directly with a local copy.

- Node A will be paid according to the amount of content delivery service it provided.
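The edge-node behavior in this list amounts to a fetch-on-miss cache with usage metering. Here is a minimal sketch under that reading; the `EdgeNode` class, its counters, and the dictionary standing in for the Media Store are hypothetical and do not describe nCDN’s real interfaces or billing.

```python
from typing import Callable


class EdgeNode:
    """Toy nCDN edge node: fetch on first request, then serve from cache."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes]) -> None:
        self._fetch = fetch_from_origin
        self._cache: dict[str, bytes] = {}
        self.origin_fetches = 0   # how often the Media Store was contacted
        self.bytes_served = 0     # basis for usage-based payment in this sketch

    def serve(self, title: str) -> bytes:
        if title not in self._cache:
            # Cache miss: fetch once from the origin and keep a local copy.
            self._cache[title] = self._fetch(title)
            self.origin_fetches += 1
        content = self._cache[title]
        self.bytes_served += len(content)
        return content


# A dictionary stands in for the Media Store (hypothetical content).
media_store = {"star-wars": b"x" * 1000}
node_a = EdgeNode(media_store.__getitem__)
node_a.serve("star-wars")   # first viewer triggers the origin fetch
node_a.serve("star-wars")   # later viewers are served from the local copy
print(node_a.origin_fetches, node_a.bytes_served)
```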

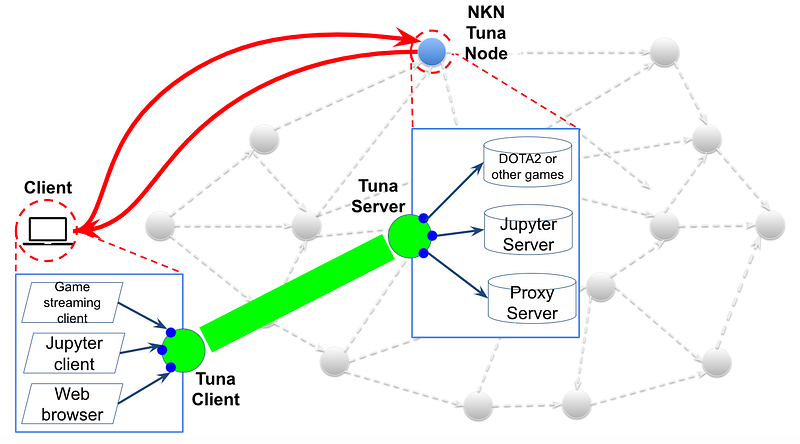

App 2: TUNA and universal tunneling

TUNA, short for Tunnel Using NKN for any Application, is a platform built on top of NKN that allows everyone to turn any network based application into a service and monetize it based on usage. Some concrete examples are:

- Play high-quality games such as DOTA2 via streaming, on a remote high-end server with a high-performance GPU.

- Work on advanced machine learning algorithms using the Jupyter notebook, utilizing the idle computing power of a remote high-performance multi-core server.

- Accelerate access to a website using a remote web proxy or web accelerator

In order to fulfill the high bandwidth and low latency requirements of these applications, the client only needs to connect to the NKN TUNA server where such services are hosted. The TUNA client pays via NKN to the TUNA node, based on the usage of certain services. In this case, we do not go through the randomized routing of NKN’s “Normal mode” at all! So we can support services similar to the likes of the upcoming Google Stadia game streaming service.

If we dive one level deeper, you can see that TUNA acts as a multiplexer, letting many different applications share the same tunnel between the client and the NKN TUNA node. All the client-side applications talk to the server side as if both resided on the same computer. The applications need no modification at all, which is the beauty and main attraction of the TUNA solution.
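To make the multiplexing idea concrete, here is a small sketch of how several application streams could share one tunnel. The frame layout (a 2-byte stream ID and a 4-byte length before each payload) and the `mux`/`demux` names are invented for this example and are not TUNA’s actual wire format.

```python
import struct

# Hypothetical frame header: stream ID (uint16) and payload length (uint32),
# both in network byte order.
HEADER = struct.Struct("!HI")


def mux(stream_id: int, payload: bytes) -> bytes:
    """Wrap one application's bytes in a frame tagged with its stream ID."""
    return HEADER.pack(stream_id, len(payload)) + payload


def demux(tunnel_bytes: bytes) -> dict[int, bytes]:
    """Split the shared tunnel back into one byte stream per application."""
    streams: dict[int, bytes] = {}
    offset = 0
    while offset < len(tunnel_bytes):
        stream_id, length = HEADER.unpack_from(tunnel_bytes, offset)
        offset += HEADER.size
        streams[stream_id] = streams.get(stream_id, b"") + tunnel_bytes[offset:offset + length]
        offset += length
    return streams


# Two applications (say, a web proxy on stream 1 and a game stream on
# stream 2) interleave their traffic on the same tunnel.
tunnel = mux(1, b"GET /") + mux(2, b"frame-0") + mux(1, b" HTTP/1.1")
print(demux(tunnel))
```

Because the framing happens below the applications, each one still sees an ordinary byte stream, which is why no application changes are needed.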

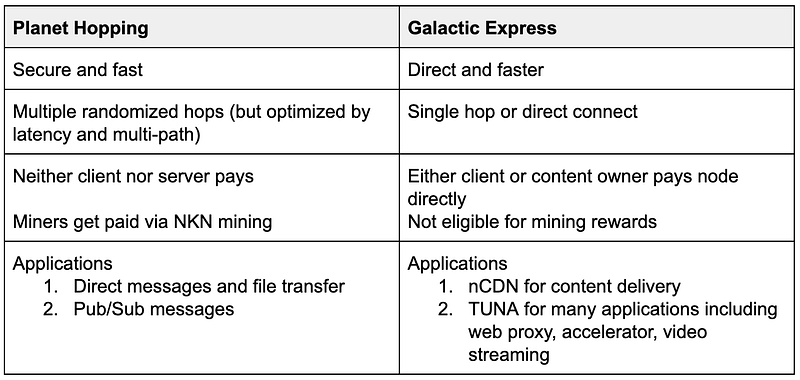

Summary

So Captain Narwhal’s choices are clear, and they depend on the type of application you are using over NKN’s network. A simple side-by-side comparison is provided below:

Captain Narwhal, may the force be with you and happy sailing across the vast NKN universe!

About NKN

NKN is the new kind of P2P network connectivity protocol & ecosystem powered by a novel public blockchain. We use economic incentives to motivate Internet users to share network connection and utilize unused bandwidth. NKN’s open, efficient, and robust networking infrastructure enables application developers to build the decentralized Internet so everyone can enjoy secure, low cost, and universally accessible connectivity.

Home: https://nkn.org/

Email: contact@nkn.org

Telegram: https://t.me/nknorg

Twitter: https://twitter.com/NKN_ORG

Forum: https://forum.nkn.org

Medium: https://medium.com/nknetwork

LinkedIn: https://www.linkedin.com/company/nknetwork/

GitHub: https://github.com/nknorg

Discord: https://discord.gg/yVCWmkC

YouTube: http://www.youtube.com/c/NKNORG